Academic Enhancement at the University of South Alabama leads special initiatives, such as the Quality Enhancement Plan (QEP), to improve student learning and success. We collaboratively plan, implement, and evaluate the QEP, and we provide ongoing support for institutional planning and evaluation and for SACSCOC accreditation. We are excited to launch our LevelUP: Uniquely Prepared for What Comes Next QEP this year.

Click the links below to learn more about our current LevelUP QEP (2023 - 2028) or see archives of our previous Team USA QEP (2013 - 2018).

Latest News

Celebrating Year 2 Successes of the LevelUP QEP

Monday - December 1, 2025

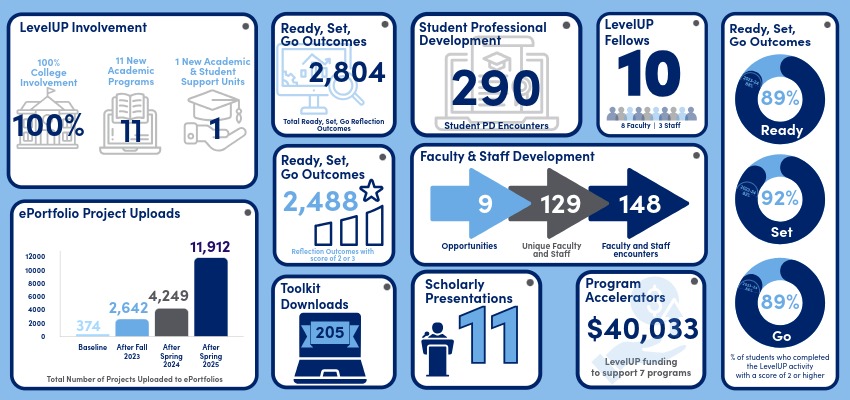

The Office of Institutional Effectiveness is pleased to share key accomplishments from the second year of its LevelUP: Uniquely Prepared for What Comes Next Quality Enhancement Plan (QEP), continuing momentum in supporting students as they prepare for their next steps.

LevelUP Program Accelerator Funding Competition 2025 Award Winners Announced

Monday - October 13, 2025

The Office of Institutional Effectiveness is proud to announce the recipients of the 2025 LevelUP Program Accelerator Awards, providing funding for innovative projects that help students connect their academic experiences to career readiness, reflection, and life after graduation. Selected proposals demonstrate creative approaches to enhancing student learning and engagement aligned with the National Association of Colleges and Employers (NACE) Career Readiness Competencies.

Institutional Effectiveness Welcomes Third Cohort of LevelUP Fellows

Monday - October 6, 2025

Through a competitive selection process, the Office of Institutional Effectiveness has announced the third cohort of LevelUP Fellows for the 2025-2026 academic year. The onboarding of this new group marks another milestone in the ongoing implementation of the LevelUP Quality Enhancement Plan (QEP), focused on preparing South Alabama students to be uniquely equipped for their next steps. The 2025-2026 Fellows represent eight academic programs and two student support areas, reflecting the cross-campus collaboration at the heart of the LevelUP QEP.
