Dr. Jesse Ables Receives $155,000 NSF Grant for Explainable Intrusion Detection Research

Posted on August 29, 2025 by Keith Lynn

Dr. Jesse Ables has been awarded a $155,000 grant from the National Science Foundation (NSF) under the Computer and Information Science and Engineering Research Initiation Initiative (CRII). His project was specifically accepted for the NSF Secure and Trustworthy Cyberspace (SaTC) CRII.

His funded project, titled “Temporal Eclectic Rule Extraction (TERE) and Rule Extraction Optimization Strategies for Explainable Intrusion Detection Systems (X-IDS),” combines two critical areas of cybersecurity and artificial intelligence: Intrusion Detection Systems (IDS), which safeguard computer networks, and Explainable Artificial Intelligence (XAI).

“Right now, many powerful AI systems are like a ‘black box’—they can detect issues in a network, such as hacking attempts or virus activity, but we often don’t know how or why they reached that conclusion. This makes it harder to fully trust their results. My research is focused on opening up that black box,” explained Dr. Ables.

Dr. Ables is developing a new algorithm called Temporal Eclectic Rule Extraction (TERE). Its goal is to create "white-box" explanations for a specific type of powerful AI called a Recurrent Neural Network (RNN). Essentially, the algorithm will allow us to see the reasoning behind an RNN's predictions.

In addition, the research will examine optimization strategies for eclectic rule extraction, as this process currently exhibits poor runtime scalability.

Dr. Ables summed up the project: “I'm building a way to make advanced network security systems not only powerful, but also transparent and understandable. This will help us better defend against cyber threats by knowing exactly why and how an AI identifies a threat.”

Dr. Ables’ interest in Explainable AI began during his Ph.D. research, where his dissertation challenged prevailing notions of what constitutes a trustworthy explanation. He argues that most current XAI solutions are neither truly explainable nor inherently trustworthy, and that new methods must prioritize both qualities from the ground up. His work explores philosophical and technical questions such as:

- How do we generate useful explanations?

- What form will these explanations take (visual, textual, resource-backed)?

- Who are the explanations for?

- How will we use the explanations?

-

Scholarship and Awards Luncheon

The School of Computing celebrated excellence and achievement during i...

May 14, 2026 -

SoC hosted an industry discussion of successful alumni to help students navigate the transition into the workforce

SoC hosted an industry discussion of successful alumni to help student...

March 27, 2026 -

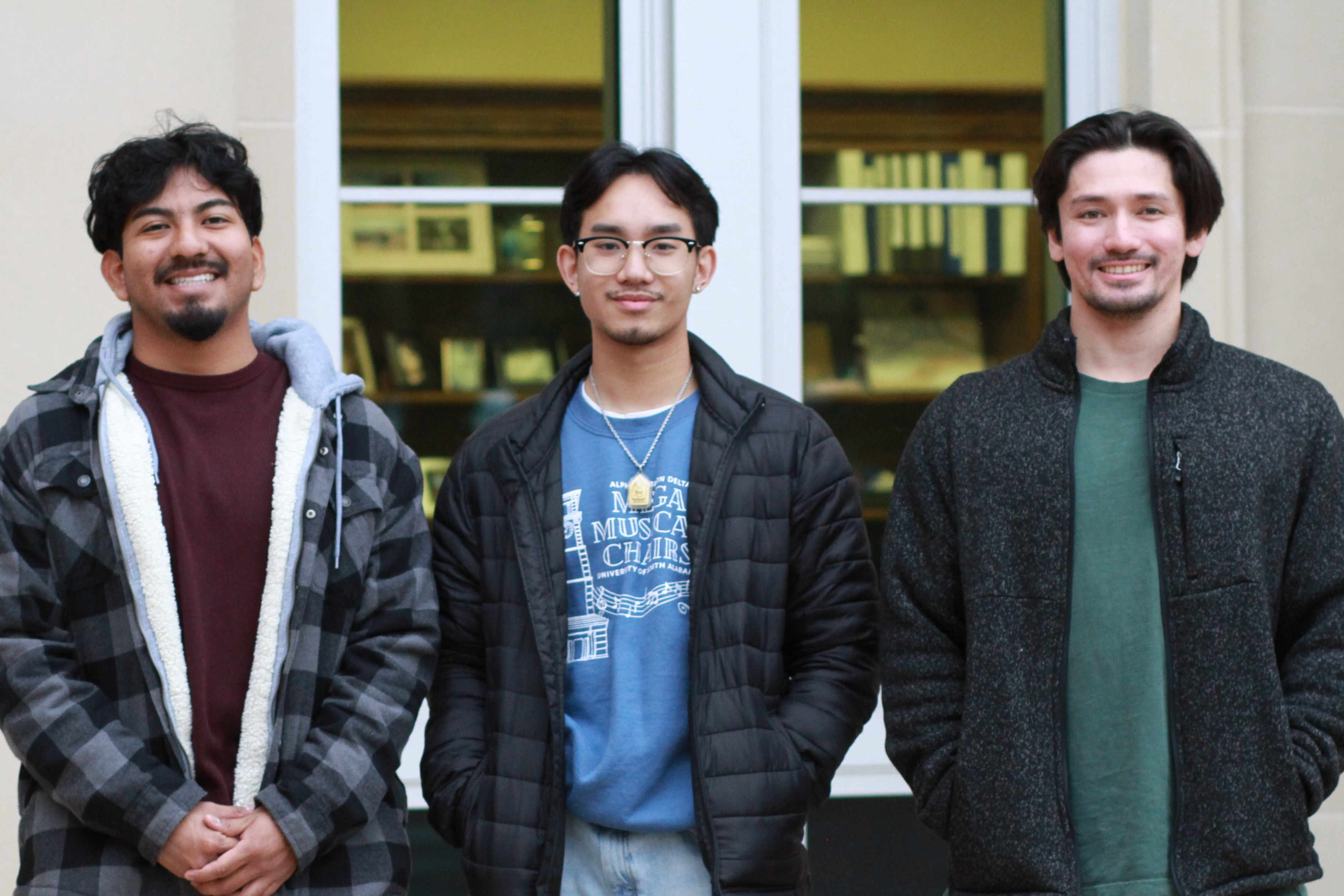

SoC Students Complete Impactful Internships with USA's CSC Networking Team

SoC Students Complete Impactful Internships with USA's CSC Networ...

December 16, 2025 -

Alumnus Jay Maru Inspires Seniors to Lead in an AI-Powered Future

The School of Computing recently welcomed alumnus Jay Maru, founder of...

October 7, 2025